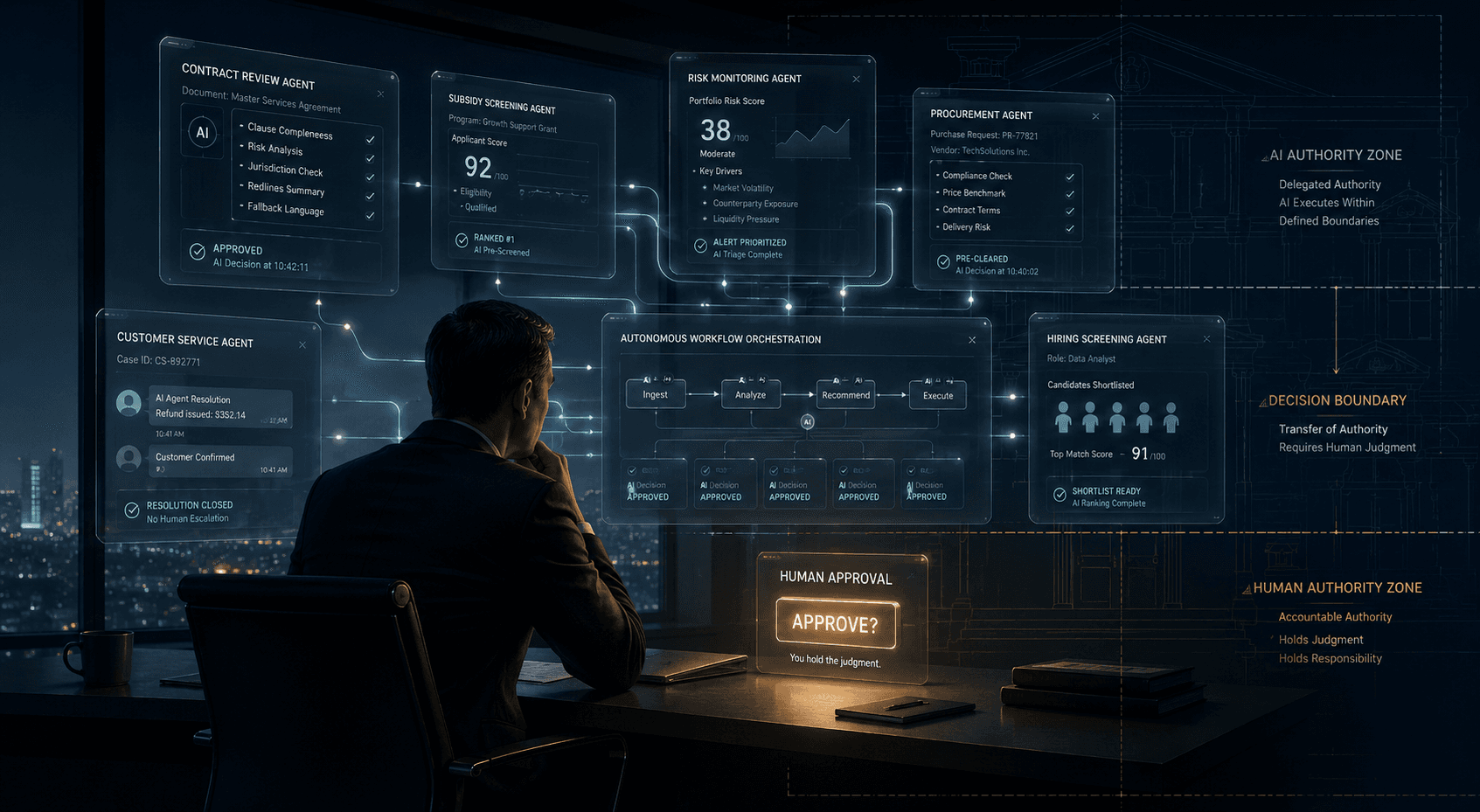

Who Holds Judgment? Decision Design in the Age of the One-Person Firm

As AI agents increasingly execute work before humans review it, enterprises are drifting toward “ceremonial approval”, a condition in which procedural oversight survives but substantive judgment disappears. This article argues that the real challenge of AI adoption is no longer automation itself but the design of institutional judgment authority. It introduces Decision Design, Decision Boundaries, and Decision Logs as a governance architecture for AI-mediated organizations.
